Design Methods

Executable design principles, failure cause analysis, core selection logic, and key engineering tradeoff dimensions for building a defensible audit and forensics capability.

2.1 Executable Principles & Bases

Effective audit and forensics design is grounded in a set of engineering principles that are both actionable and verifiable. Each principle below includes the conditions under which it applies and the technical or legal basis that justifies it. Treat these principles as mandatory design constraints, not aspirational guidelines: each has a documented failure mode when violated.

| # | Principle | Applies When | Basis |
|---|---|---|---|
| 1 | Single Authoritative Time: Enforce redundant NTP, monitor skew, quarantine bad-time sources | Multi-source correlation is required | Engineering best practice + audit defensibility |
| 2 | Normalize Before You Analyze: Define canonical event schema, version it, and test parsers | Multiple vendors/formats exist | Operational reliability |
| 3 | Encrypt in Transit, Verify at Rest: mTLS for logs; hash/sign for vault objects | Evidence integrity is required | Security + legal chain of custody |
| 4 | Least Privilege + Separation of Duties: SOC analysts cannot delete/alter vault objects; storage admins cannot read evidence without dual control | Always | Internal control framework |
| 5 | Record the Recorder: Audit all actions on SIEM/vault (admin logins, policy changes, exports) | Always | Meta-audit requirement |
| 6 | Evidence Should Be Reproducible: Maintain tool versions, hash of forensic tools, and runbook versions attached to cases | Forensic investigations | Repeatability and defensibility |
| 7 | Pre-Position Forensic Points: Egress PCAP, bastion sessions, endpoint triage scripts, config snapshots | Before incidents occur | Forensic readiness |
| 8 | Tiered Retention: Hot/warm/cold design based on query frequency and legal requirements | Storage cost optimization needed | Cost vs. utility tradeoff |
| 9 | Data Minimization & Privacy: Capture only what is needed; mask sensitive fields; enforce access logging | PII or regulated data in scope | Compliance (GDPR, HIPAA, etc.) |
| 10 | Design for Failure: Disk spool, queue replay, multi-AZ storage, DR drills | Always | Resilience engineering |
| 11 | Schema Completeness KPIs: Track mandatory field presence; treat missing fields as defects | Ongoing operations | Quality management |
| 12 | Export Is a Controlled Process: Evidence export must be signed, logged, and approved; include verification guide | Legal or compliance export required | Admissibility requirements |
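As an illustration of principles 3 and 12 (hash/sign for vault objects, signed export), the following is a minimal sketch of a signed manifest. It is not a prescribed implementation: the function names `build_manifest` and `verify_manifest` are hypothetical, and HMAC-SHA256 with a shared key stands in for whatever signing mechanism the vault actually uses (a production design would more likely use asymmetric signatures with keys held in an HSM or KMS).

```python
import hashlib
import hmac
import json


def build_manifest(objects: dict[str, bytes], key: bytes) -> dict:
    """Hash every evidence object with SHA-256 and sign the hash list.

    `objects` maps an object name to its raw bytes; `key` is an
    illustrative shared signing key (assumption, see lead-in).
    """
    entries = {
        name: hashlib.sha256(data).hexdigest()
        for name, data in sorted(objects.items())
    }
    # Canonical serialization so the signature is reproducible.
    payload = json.dumps(entries, sort_keys=True).encode("utf-8")
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"entries": entries, "signature": signature}


def verify_manifest(objects: dict[str, bytes], manifest: dict, key: bytes) -> bool:
    """Return True only if the manifest signature and all object hashes match."""
    payload = json.dumps(manifest["entries"], sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # the manifest itself was altered
    current = {
        name: hashlib.sha256(data).hexdigest() for name, data in objects.items()
    }
    return current == manifest["entries"]  # any changed/missing object fails
```

A verifier who holds the key can rerun `verify_manifest` against the exported objects; a single changed byte in any object, or any edit to the manifest, causes verification to fail.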

2.2 Failure Cause → Recommendation

Understanding the failure mechanisms that undermine audit and forensics capabilities is essential for proactive design. The table below maps each failure mechanism to its observable consequence and the recommended avoidance strategy. These failure patterns are derived from real-world incident response engagements where evidence was challenged or unusable.

| Failure Mechanism | What Happens | Avoidance / Recommendation |
|---|---|---|
| No unified NTP | Timelines disputed; events cannot be sequenced across sources | Enforce dual NTP sources, alert on skew >1s, quarantine sources with excessive drift |
| Logs lack user/asset mapping | Cannot attribute actions to specific individuals or systems | Integrate CMDB + IAM, enrich on ingest, enforce mandatory fields for user ID and asset ID |
| Admin bypasses bastion | No proof of privileged action; "admin did not do it" disputes | Enforce network ACLs so admin access is only possible via bastion; verify monthly |
| SIEM hot retention too short | Lose early-stage indicators; cannot reconstruct attack origin | Size storage for minimum 30–90 days hot; review retention against incident dwell times |
| Evidence can be altered | Integrity challenged; evidence rejected in proceedings | WORM/object lock + signed manifests; dual control for retention policy changes |
| Packet capture everywhere | Cost explosion, privacy violations, storage overrun | Selective PCAP at egress/critical zones with triggers; define capture scope in policy |
| Parsing drift after upgrades | Fields change silently; correlation breaks without warning | Schema registry + parser tests per vendor version; run canary before full rollout |
| Forensics tools not controlled | Results not repeatable; tool integrity questioned | Tool hash catalog + controlled versions; documented procedures attached to cases |
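The time-skew recommendation in the first row can be sketched as a simple classifier. The 1-second alert threshold comes from the table; the quarantine threshold is an assumption, since the table does not quantify "excessive drift", and the function name `classify_source` is hypothetical.

```python
ALERT_SKEW_SECONDS = 1.0       # from the table: alert on skew > 1 s
QUARANTINE_SKEW_SECONDS = 5.0  # assumed value; "excessive drift" is not quantified


def classify_source(source_epoch: float, reference_epoch: float) -> str:
    """Compare a log source's clock with the reference clock.

    Both arguments are Unix timestamps in seconds. Returns "ok",
    "alert", or "quarantine" depending on the absolute skew.
    """
    skew = abs(source_epoch - reference_epoch)
    if skew > QUARANTINE_SKEW_SECONDS:
        return "quarantine"
    if skew > ALERT_SKEW_SECONDS:
        return "alert"
    return "ok"
```

In practice the reference timestamp would come from the authoritative NTP hierarchy, and events from a quarantined source would be flagged so they are not used to sequence a timeline without manual review.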

2.3 Core Design / Selection Logic

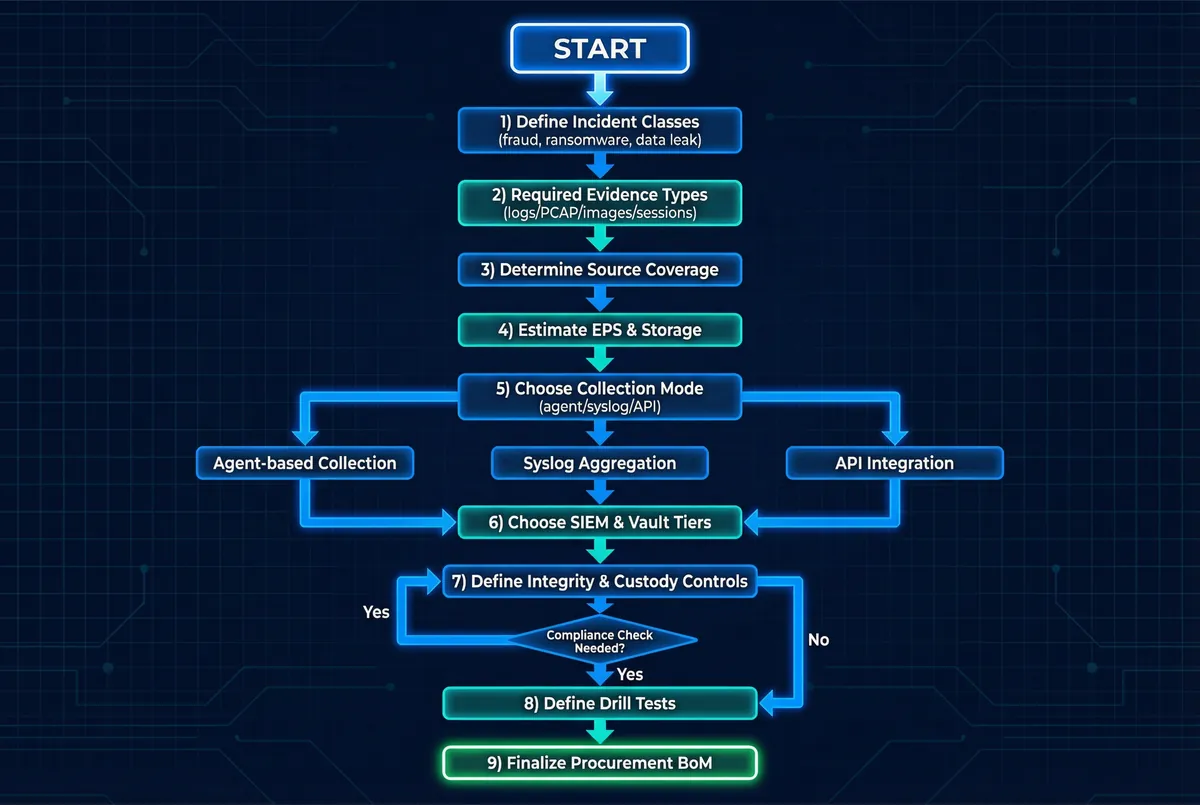

The design and selection process follows a structured eight-step decision framework that transforms business risk requirements into a concrete technical implementation. This framework ensures that every design decision is traceable to a specific threat class or compliance requirement, enabling clear justification during audits and procurement reviews.

Decision Steps

1. Identify threat/incident classes and legal/compliance drivers (fraud, ransomware, data leak, insider threat, regulatory audit).

2. Define minimum evidence set per class: identity logs + endpoint telemetry + network flows + privileged sessions + configuration diffs.

3. Map evidence to sources and collection methods; flag unsupported systems as gaps requiring isolation or replacement.

4. Capacity sizing: estimate EPS, PCAP Mbps, storage tiers, and retention periods using the calculators in Chapter 9.

5. Choose platforms: SIEM/log platform, vault with immutability, PAM/bastion, EDR/NDR as needed for coverage gaps.

6. Define custody workflow and role separation: who can collect, who can seal, who can export, who can verify.

7. Define acceptance tests and recurring drills: what constitutes a successful evidence reconstruction, and at what frequency.

8. Produce BoM + implementation plan + O&M runbooks with KPIs and escalation paths.
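The capacity-sizing step reduces to straightforward arithmetic. The sketch below is a rough first-pass estimate, not a substitute for the Chapter 9 calculators; the function names, the average event size, and the compression ratio are all illustrative assumptions to be replaced with measured values.

```python
SECONDS_PER_DAY = 86_400


def log_ingest_gb_per_day(eps: float, avg_event_bytes: float) -> float:
    """Daily raw log volume in GB from events per second and mean event size."""
    return eps * avg_event_bytes * SECONDS_PER_DAY / 1e9


def pcap_gb_per_day(mbps: float) -> float:
    """Daily packet-capture volume in GB from a sustained rate in megabits/s."""
    bytes_per_second = mbps * 1e6 / 8
    return bytes_per_second * SECONDS_PER_DAY / 1e9


def tier_storage_gb(daily_gb: float, retention_days: int,
                    compression_ratio: float = 1.0) -> float:
    """Storage needed for one tier; compression_ratio of 4.0 means 4:1."""
    return daily_gb * retention_days / compression_ratio


# Illustrative inputs only: 5,000 EPS at an assumed 500 bytes per event.
daily = log_ingest_gb_per_day(eps=5_000, avg_event_bytes=500)
hot = tier_storage_gb(daily, retention_days=90)
```

With these assumed inputs the raw ingest works out to 216 GB per day, so a 90-day uncompressed hot tier would need roughly 19.4 TB before indexing overhead and replication are added.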

2.4 Key Engineering Dimensions

Every audit and forensics design involves tradeoffs across multiple engineering dimensions. The table below summarizes the key dimensions, what to optimize for each, and practical design tactics that balance competing requirements. Understanding these tradeoffs helps teams make informed decisions when budget, timeline, or operational constraints require prioritization.

| Dimension | What to Optimize | Practical Design Tactics |
|---|---|---|
| Performance / UX | Fast search, fast evidence export | Hot index sizing, fielded search optimization, evidence packaging automation |
| Stability / Reliability | No data loss under any condition | Disk buffering, queue replay, HA collectors (N+1), multi-AZ storage, DR drills |
| Maintainability | Easy upgrades without evidence gaps | Schema versioning, CI tests for parsers, canary deployments, rollback procedures |
| Compatibility / Extensibility | Add new sources without redesign | Standard connectors (syslog/TLS, OCSF, CEF), API-first design, schema registry |
| Lifecycle Cost (LCC) | Control long-term total cost | Tiered retention (hot/warm/cold), compression, sampling policies for low-value logs |
| Energy / Green | Minimize unnecessary compute overhead | Efficient storage tiering, right-size compute, decommission unused parsers |
| Compliance / Certification | Pass audits without major findings | Immutable storage proofs, access logs, policy evidence packages, drill records |
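The tiered-retention tactic under Lifecycle Cost can be expressed as an age-based placement rule. This is a minimal sketch: the function name `select_tier` is hypothetical, the 90-day hot boundary is taken from the upper end of the 30–90 day range in Section 2.2, and the 365-day warm boundary is an assumption that should be set from actual query frequency and legal retention requirements.

```python
def select_tier(age_days: int, hot_days: int = 90, warm_days: int = 365) -> str:
    """Choose a storage tier for a log segment based on its age in days.

    hot_days:  upper bound of the fast-search tier (90 assumed from Section 2.2).
    warm_days: upper bound of the slower, cheaper tier (assumed value).
    Anything older goes to cold/archive storage.
    """
    if age_days < 0:
        raise ValueError("age_days must be non-negative")
    if age_days <= hot_days:
        return "hot"
    if age_days <= warm_days:
        return "warm"
    return "cold"
```

A lifecycle job could evaluate this rule daily per index or object prefix; segments under legal hold would need an override so they are never moved or expired by age alone.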